I’ve built enough production systems to know when a technology shift is real and when it’s just noise.

You’re probably tired of chasing the latest framework or tool only to watch it fade six months later. The problem isn’t that you’re not paying attention. It’s that the signal-to-noise ratio in software development keeps getting worse.

Here’s what actually matters right now: the technologies reshaping how we build applications aren’t the ones getting the most headlines. They’re the ones quietly changing what’s possible in production environments.

I’ve spent years working with advanced computing protocols and AI-driven systems in real-world scenarios. Not demos. Not proof-of-concepts. Actual production code serving actual users.

This article cuts through the hype. I’ll show you which innovations deserve your time and which ones you can safely ignore.

You’ll learn what separates a passing trend from a foundational shift. And you’ll get a clear roadmap for implementing the strategies that actually create a competitive advantage.

No buzzwords. No fluff. Just what works when you’re building systems that need to perform under pressure.

Excntech tracks these shifts as they happen. We test new approaches in real environments and share what we learn.

The AI Co-Pilot: Augmenting Developer Expertise, Not Replacing It

I’m tired of hearing the same debate.

Will AI replace developers? Should we all just give up and learn to farm?

Here’s what actually bugs me. People who’ve never written a line of production code love to make sweeping claims about how AI will do everything. Meanwhile, those of us in the trenches know the reality is way more complicated.

You know what’s really frustrating? Spending three hours writing boilerplate code for the hundredth time. Or debugging the same validation logic you’ve written in five different projects.

That’s where AI coding assistants actually help.

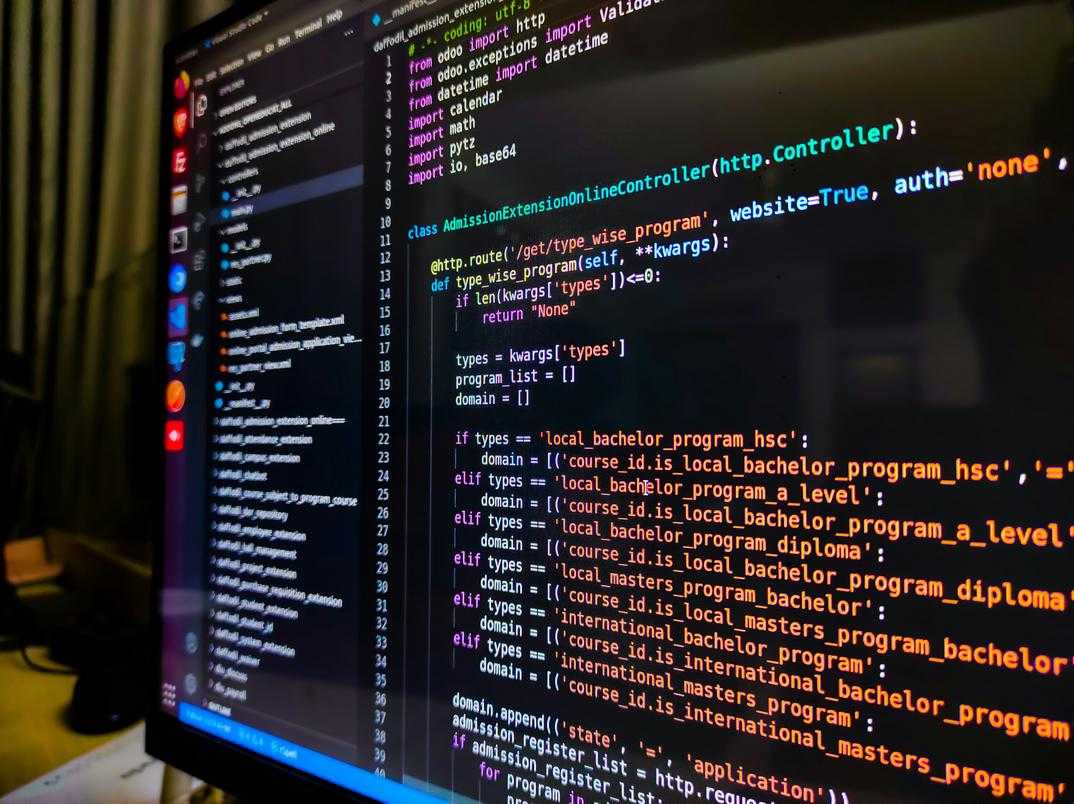

Tools like GitHub Copilot don’t replace what I do. They handle the repetitive stuff so I can focus on problems that actually require thinking. When I’m building a microservice, the AI can generate my data validation schemas and basic API endpoints while I work on the business logic that matters.
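For a sense of what that repetitive work looks like, here is the kind of validation boilerplate an assistant might draft for a hypothetical `CreateUserRequest` payload. It’s a minimal sketch using only the Python standard library. The field names and rules are illustrative assumptions, not output from any particular tool.

```python
from __future__ import annotations

import re
from dataclasses import dataclass

# Hypothetical payload and rules, for illustration only.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


@dataclass
class CreateUserRequest:
    email: str
    username: str
    age: int


def validate_create_user(payload: dict) -> tuple[CreateUserRequest | None, list[str]]:
    """Return (parsed request, []) on success, or (None, errors) on failure."""
    errors: list[str] = []
    email = payload.get("email")
    username = payload.get("username")
    age = payload.get("age")

    if not isinstance(email, str) or not EMAIL_RE.match(email):
        errors.append("email must be a valid address")
    if not isinstance(username, str) or not 3 <= len(username) <= 32:
        errors.append("username must be 3-32 characters")
    # bool is a subclass of int in Python, so reject it explicitly.
    if not isinstance(age, int) or isinstance(age, bool) or not 13 <= age <= 130:
        errors.append("age must be an integer between 13 and 130")

    if errors:
        return None, errors
    return CreateUserRequest(email=email, username=username, age=age), []
```

Nothing here is hard. It’s just tedious, and it’s the part worth handing off so you can spend the review time on the business rules.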

Some developers say this makes us lazy. That we’re losing fundamental skills by letting AI write code for us.

But think about it. We stopped writing assembly code for most applications decades ago. Did high-level languages make us worse programmers? Or did they free us up to build more complex systems?

The shift happening right now at Excntech and across the wider software development community is about changing what expertise means. I’m not just writing code anymore. I’m engineering prompts, integrating AI models, and making strategic decisions about system architecture.

AI-driven testing tools now catch bugs I might miss after staring at code for eight hours straight. Anomaly detection spots patterns in production that would take me weeks to find manually.
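Production anomaly detectors vary widely, but the core idea can be sketched with a rolling z-score: flag a metric sample that sits too many standard deviations away from its recent history. The window size, warm-up length, and threshold below are assumptions chosen for illustration, not tuned values from any specific tool.

```python
from collections import deque
from statistics import mean, pstdev


class RollingZScoreDetector:
    """Flags samples that deviate strongly from a sliding window of recent values."""

    def __init__(self, window: int = 30, threshold: float = 3.0, warmup: int = 5) -> None:
        self.values: deque[float] = deque(maxlen=window)
        self.threshold = threshold
        self.warmup = warmup

    def observe(self, value: float) -> bool:
        """Return True if `value` looks anomalous relative to the current window."""
        is_anomaly = False
        # Wait for a few samples so the baseline means something.
        if len(self.values) >= self.warmup:
            mu = mean(self.values)
            sigma = pstdev(self.values)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        self.values.append(value)
        return is_anomaly


detector = RollingZScoreDetector()
latencies_ms = [100, 102, 98, 101, 99, 100, 103, 97, 500]
flags = [detector.observe(v) for v in latencies_ms]
print(flags)  # only the final 500 ms sample is flagged
```

One design choice worth noting: this sketch adds flagged samples to the window too, so the baseline drifts toward a new normal after a sustained shift. Excluding them keeps the baseline stable but makes it slower to adapt.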

What frustrates me most? Developers who refuse to adapt because they think using AI assistance somehow diminishes their skills. Meanwhile, they’re still manually writing the same CRUD operations they’ve written a thousand times before.

The job isn’t disappearing. It’s evolving. And honestly, it’s getting more interesting.

Advanced Computing Protocols: The New Language of Microservices

REST has been the go-to for years.

But if you’re building microservices that need to handle thousands of requests per second, you’ve probably hit its ceiling.

I’m not saying REST is dead. It’s just not built for what we’re asking it to do anymore.

Here’s what’s happening.

When you’re running internal service-to-service communication, the text-based JSON payloads most REST APIs use start to drag. The overhead adds up fast. You end up burning CPU cycles just parsing data.

Some developers say we should stick with what works. REST is simple, they argue. Everyone knows it. Why complicate things?

Fair point. But I think they’re missing where this is headed.

gRPC is changing the game for internal traffic. It uses HTTP/2 and Protocol Buffers instead of JSON. The result? Smaller payloads and faster serialization. I’ve seen latency drop by 60% just from switching (Google’s own benchmarks back this up).
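To see where the smaller payloads come from, here is a hand-rolled sketch of how Protocol Buffers encodes a single integer field on the wire: a tag (field number plus wire type) followed by a base-128 varint. It mirrors the encoding described in the Protocol Buffers documentation, but it is not the `protobuf` library. Real services would use generated code, and the one-field message is an assumption for illustration.

```python
import json


def encode_varint(value: int) -> bytes:
    """Encode a non-negative int as a Protocol Buffers base-128 varint."""
    if value < 0:
        raise ValueError("this sketch only handles non-negative values")
    out = bytearray()
    while True:
        byte = value & 0x7F  # low 7 bits
        value >>= 7
        if value:
            out.append(byte | 0x80)  # continuation bit: more bytes follow
        else:
            out.append(byte)
            return bytes(out)


def encode_int_field(field_number: int, value: int) -> bytes:
    """Encode one varint field: tag = (field_number << 3) | wire type 0."""
    return encode_varint(field_number << 3) + encode_varint(value)


wire = encode_int_field(1, 150)
as_json = json.dumps({"a": 150}, separators=(",", ":")).encode()
print(wire.hex(), len(wire))  # 089601 3
print(as_json, len(as_json))  # b'{"a":150}' 9
```

The field name `a` never travels on the wire. The schema shared by both services supplies it, which is a big part of the size win and also why both sides need the same `.proto` definition.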

It’s perfect for east-west traffic between your services. Not so great for public APIs where you need browser support.

Then there’s GraphQL.

It flips the script on who controls the data shape. Instead of your API deciding what to send, the client asks for exactly what it needs. No more pulling in 50 fields when you only want three.

Mobile apps love this. Less data over the wire means faster load times and happier users.
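A GraphQL server does far more than this (parsing, type checking, resolvers), but the client-chooses-the-shape idea can be sketched as a projection over a record, where the client supplies a nested selection of fields. The `select` helper and the sample `product` record are hypothetical and are not part of any GraphQL library.

```python
from typing import Any


def select(data: Any, selection: dict[str, Any]) -> Any:
    """Return only the fields named in `selection`.

    `selection` maps field names to None (a leaf) or to a nested selection,
    loosely like a GraphQL selection set: { name price { amount } }.
    """
    if isinstance(data, list):
        return [select(item, selection) for item in data]
    result = {}
    for name, sub in selection.items():
        value = data.get(name)
        result[name] = value if sub is None or value is None else select(value, sub)
    return result


product = {
    "id": "p-1",
    "name": "Desk lamp",
    "description": "Long marketing copy the mobile client never shows",
    "price": {"amount": 39.0, "currency": "USD", "tax_code": "A1"},
    "reviews": [{"rating": 5, "body": "Great"}, {"rating": 4, "body": "Good"}],
}

# The client asks for three things instead of the whole resource.
view = select(product, {"name": None, "price": {"amount": None}, "reviews": {"rating": None}})
print(view)
```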

Here’s my prediction. Within two years, most new microservice architectures will use a mix of all three protocols. gRPC for internal calls, GraphQL for client-facing APIs, and REST for simple public endpoints.

So how do you choose?

Ask yourself: Is this a public or private API? Public usually means REST or GraphQL. Private means gRPC is worth considering.

What are your performance needs? If you’re pushing high throughput with low latency requirements, gRPC wins.

How complex is your data? If clients need different subsets of the same resource, GraphQL makes sense.
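The three questions above can be folded into a small decision helper. This is a hypothetical sketch that encodes this article’s rules of thumb and nothing more. Real choices also weigh team experience, tooling, and existing clients.

```python
from enum import Enum


class Protocol(Enum):
    REST = "REST"
    GRAPHQL = "GraphQL"
    GRPC = "gRPC"


def shortlist(public: bool, high_throughput: bool, varied_subsets: bool) -> list[Protocol]:
    """Turn the three questions into candidate protocols (rules of thumb only)."""
    candidates: list[Protocol] = []
    if not public or high_throughput:
        # Private traffic, or high throughput with tight latency: gRPC is worth a look.
        # For public APIs, check browser support before committing.
        candidates.append(Protocol.GRPC)
    if varied_subsets:
        # Clients need different subsets of the same resource.
        candidates.append(Protocol.GRAPHQL)
    if public and not varied_subsets:
        # Simple public endpoints.
        candidates.append(Protocol.REST)
    return candidates


print(shortlist(public=False, high_throughput=True, varied_subsets=False))
```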

I write about this regularly in my excntech technology updates from eyexcon, because the software development landscape keeps shifting.

The protocol you pick today shapes your architecture for years. Choose based on your actual constraints, not what’s trendy.

From Cloud to Edge: Architecting for a Decentralized World

You’ve probably heard the term “edge computing” thrown around in tech circles.

But what does it actually mean for you as a developer?

Here’s the simple version. Edge computing means processing data closer to where it’s created instead of sending everything to a distant cloud server. Think of it like this: instead of calling headquarters for every decision, you empower local teams to act fast.

The difference matters.

Cloud vs Edge: When Each Makes Sense

Cloud computing works great when you need massive processing power and can tolerate a few hundred milliseconds of delay. You send your data up, it gets processed, and results come back.

Edge computing flips that model. The processing happens right there on the device or nearby. No round trip to a data center.

For most web apps? Cloud is fine. For a self-driving car making split-second decisions? You can’t wait for cloud latency.

I’ve seen developers struggle with this choice. They default to cloud because it’s familiar, then hit a wall when real-time performance becomes critical.

Industrial IoT sensors need edge processing because sending millions of data points to the cloud every second gets expensive fast. Autonomous vehicles can’t afford the 50-100ms delay of a cloud round trip when avoiding obstacles. Augmented reality applications fall apart if there’s lag between your movement and what you see.
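The autonomous-vehicle point is easy to check with arithmetic. Assuming a car travelling at 100 km/h (a speed chosen purely for illustration), a 50-100 ms round trip means the vehicle covers more than a metre before a cloud response could even arrive, and that’s before any processing time.

```python
def distance_during_delay(speed_kmh: float, delay_ms: float) -> float:
    """Metres travelled while waiting `delay_ms` for a response."""
    metres_per_second = speed_kmh * 1000 / 3600
    return metres_per_second * delay_ms / 1000


for delay in (50, 100):
    print(f"{delay} ms at 100 km/h -> {distance_during_delay(100, delay):.2f} m")
# 50 ms at 100 km/h -> 1.39 m
# 100 ms at 100 km/h -> 2.78 m
```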

But edge computing brings its own headaches.

State management gets messy when you have hundreds of devices making independent decisions. How do you keep them in sync? Data synchronization between edge and cloud isn’t straightforward when network connections drop randomly. And securing distributed endpoints? That’s a whole different beast than protecting a centralized server.

(You can’t just slap a firewall on it and call it done.)
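One common way to cope with dropped connections is store-and-forward: the edge device buffers readings locally and flushes them once the link comes back. Here’s a minimal sketch. The `send` callback, the use of `ConnectionError` as the offline signal, and the buffer size are assumptions. A real device would also persist the buffer to disk and deal with partial failures.

```python
from collections import deque
from typing import Callable


class StoreAndForward:
    """Buffers readings while offline and flushes them in order once sending succeeds."""

    def __init__(self, send: Callable[[dict], None], max_buffered: int = 10_000) -> None:
        self.send = send
        # Bounded: when full, the oldest readings are dropped first.
        self.buffer: deque[dict] = deque(maxlen=max_buffered)

    def record(self, reading: dict) -> None:
        self.buffer.append(reading)
        self.flush()

    def flush(self) -> int:
        """Send buffered readings in order; stop at the first failure. Returns count sent."""
        sent = 0
        while self.buffer:
            try:
                self.send(self.buffer[0])
            except ConnectionError:
                break  # still offline: keep the reading and retry later
            self.buffer.popleft()
            sent += 1
        return sent


# Usage with a simulated link that starts offline (hypothetical sensor data).
link_up = False
delivered: list[dict] = []


def fake_send(reading: dict) -> None:
    if not link_up:
        raise ConnectionError("link down")
    delivered.append(reading)


queue = StoreAndForward(fake_send)
queue.record({"sensor": "temp-1", "value": 21.5})
queue.record({"sensor": "temp-1", "value": 21.7})
print(len(queue.buffer), len(delivered))  # 2 0
link_up = True
print(queue.flush(), len(delivered))  # 2 2
```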

WebAssembly is changing how we think about edge deployment. It lets you run the same code across different devices with near-native performance and built-in sandboxing. I’m watching developers use Wasm to deploy secure applications on everything from smartphones to industrial controllers.

My advice for software developers here is simple: start with cloud, and move to edge only when you have a clear reason.

Don’t architect for decentralization just because it sounds cool.

Platform Engineering: The Essential Strategy for Scaling Innovation

You know what drives me crazy?

Watching talented developers spend half their day fighting with infrastructure instead of building things that matter.

I see it everywhere. Smart people who could be shipping features are stuck wrestling with Kubernetes configs. Or trying to figure out why their CI/CD pipeline broke again. Or hunting down the right person to ask about deployment permissions.

It’s a waste. And honestly, it’s fixable.

That’s where platform engineering comes in. At its core, it’s about building an Internal Developer Platform (IDP) that gives your team self-service capabilities. Think of it as creating the tools and systems that let developers do their jobs without constantly asking for help.

Here’s what that actually means.

Instead of making developers learn every detail of your cloud infrastructure, you abstract away the complexity. They don’t need to become AWS experts just to deploy a service. The platform handles that.

The same goes for CI/CD pipelines and observability tooling. Your platform team builds these once, the right way, and everyone else just uses them.

Now, some people will say this is overkill. They’ll tell you developers should understand the full stack, that abstracting things away makes them lazy or less capable.

I disagree.

Sure, understanding infrastructure is valuable. But there’s a difference between understanding and having to manually configure everything from scratch every single time. That’s not building character. That’s just slow.

What actually works is something called the Golden Path. Here’s how it breaks down:

- Your platform team identifies the most common tasks developers need to do

- They build supported, well-tested paths for those tasks

- Developers follow those paths and get things done fast

Spinning up a new service? There’s a path for that. Deploying to production? Already built. Need monitoring and logs? It’s there waiting for you.
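To make the idea concrete, here is a hypothetical sketch of a golden-path scaffold: a developer supplies a service name and owning team, and the platform fills in vetted defaults for CI, deployment, and observability. Every key, default, and value below is an illustrative assumption, not the schema of any real IDP product.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ServiceSpec:
    """What the developer provides: the minimum needed to get started."""
    name: str
    team: str
    language: str = "python"


@dataclass
class PlatformDefaults:
    """What the platform team decides once, for everyone (hypothetical values)."""
    ci_pipeline: str = "standard-build-test-scan"
    replicas: int = 2
    health_check_path: str = "/healthz"
    dashboards: list[str] = field(default_factory=lambda: ["latency", "errors", "saturation"])


def new_service(spec: ServiceSpec, defaults: PlatformDefaults | None = None) -> dict:
    """Expand a small spec into a full, standardized service definition."""
    defaults = defaults or PlatformDefaults()
    return {
        "service": spec.name,
        "owner": spec.team,
        "runtime": spec.language,
        "ci": defaults.ci_pipeline,
        "deploy": {"replicas": defaults.replicas, "health_check": defaults.health_check_path},
        "observability": {"dashboards": list(defaults.dashboards), "log_labels": {"team": spec.team}},
    }


svc = new_service(ServiceSpec(name="billing-api", team="payments"))
print(svc)
```

The developer writes two fields. Everything else comes from decisions the platform team made once and tested.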

The result? Your team moves faster. Way faster.

But speed isn’t the only win here. When you improve Developer Experience (DX) through good platform engineering, you see real business impact. Time-to-market shrinks because people aren’t blocked waiting for infrastructure. Developer retention goes up because fewer people leave over frustrating tooling. And your software delivery becomes more reliable because everyone’s using the same tested paths instead of reinventing the wheel.

The bottom line is simple. Platform engineering isn’t about babying your developers. It’s about removing the friction that keeps them from doing their best work.

Turning Technological Advancement into a Competitive Advantage

We’ve covered the four pillars that are reshaping how teams build software today.

AI-augmentation changes how developers write code. Advanced protocols speed up data exchange. Edge architectures bring processing closer to users. Platform engineering ties it all together.

You know what happens when you fall behind. Your competitors ship faster and your users expect more.

The gap widens every quarter.

But here’s the thing: you don’t need to adopt everything at once. Strategic moves beat rushed implementations every time.

These advancements work because they solve real problems. Your teams build faster. Your applications handle more load. Your users get better experiences.

Start with one area. Pick the biggest pain point your team faces right now.

Is it developer productivity? Look at AI-augmentation tools. Are your APIs slow? Test advanced protocols. Do you need lower latency close to your users or devices? Try edge architectures. Are developers blocked on infrastructure? Invest in platform engineering.

Run a pilot. Measure the results. Then expand what works.
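“Measure the results” is easier to act on with a concrete metric. Here’s a minimal sketch comparing p95 latency before and after a pilot using Python’s `statistics.quantiles`. The sample latencies are placeholders, not real measurements, so substitute numbers from your own pilot.

```python
from statistics import quantiles


def p95(samples: list[float]) -> float:
    """95th percentile, linearly interpolated between observed values."""
    # n=20 yields 19 cut points; the last one is the 95th percentile.
    return quantiles(samples, n=20, method="inclusive")[-1]


# Placeholder latencies in ms; replace with measurements from your own pilot.
before = [120, 130, 125, 140, 135, 128, 300, 132, 138, 129]
after = [80, 85, 90, 82, 88, 84, 150, 86, 83, 87]

p95_before, p95_after = p95(before), p95(after)
improvement = (p95_before - p95_after) / p95_before
print(f"p95 before: {p95_before:.0f} ms, after: {p95_after:.0f} ms ({improvement:.0%} lower)")
# p95 before: 228 ms, after: 123 ms (46% lower)
```

Tail percentiles like p95 tend to reveal more than averages here, because the slow outliers are usually what users notice.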

Excntech gives you the software development strategies you need to stay ahead. The technology exists, and it’s proven.

Your next step is simple: identify one area and test one approach. That’s how you turn advancement into advantage.